Have you tried Meta AI before today?

I've been ignoring Meta AI for two years. I’m sure most of us have.

Llama was cool for developers.

For everyone else, Meta AI on WhatsApp was a gimmick. It was so bad that nothing made me want to use it over Claude or Gemini.

Then they dropped Muse Spark this week and I spent a few hours testing it.

It was built over nine months as a full rebuild from scratch, at a cost of $14.3 billion, with Alexandr Wang from Scale AI running the whole thing.

Here's what it can do and where it completely falls apart.

What is Muse Spark?

It's Meta's first model built from scratch instead of iterating on Llama.

It lives in the Meta AI app and meta.ai right now, and is rolling out to WhatsApp, Instagram, Facebook, and Ray-Ban glasses over the next few weeks.

The pitch is native multimodality. You point your camera at something real, and it understands what it's looking at and gives you something useful back.

I ran four tests.

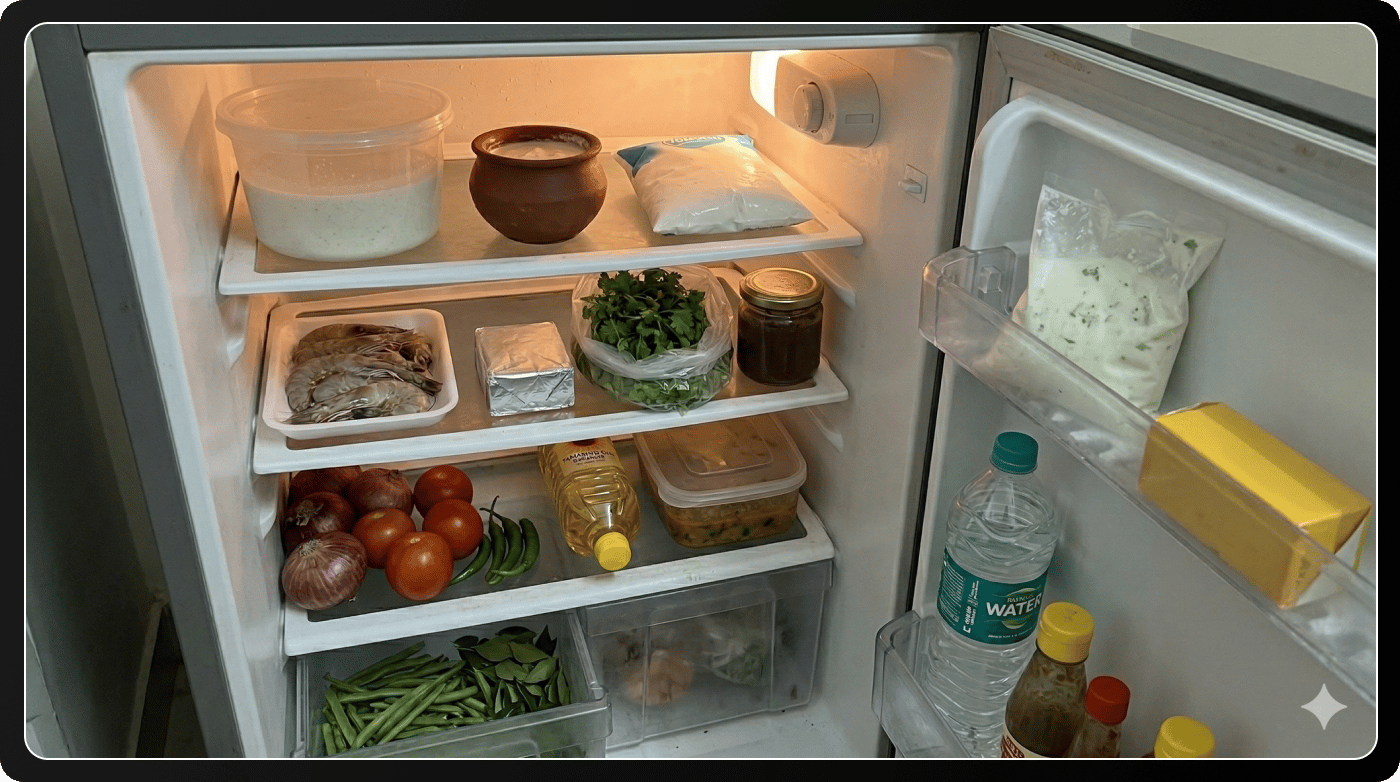

Test 1: The fridge

Input:

Prompt: "I'm pescatarian with high cholesterol. What should I eat and what should I avoid?"

Output:

It got a bunch of things right:

Spotted the shrimp tray and said occasional shrimp is fine, once every two weeks at most, because of the dietary cholesterol.

Called out the yellow block on the door specifically, said it looks like butter, and told me to limit it sharply.

Recommended the green beans, tomatoes, dal, and coriander by name.

Suggested bangda and sardines using their local names.

Said filtered water is better for cooking because tap water here is hard.

It built a full meal plan from what was visible in the photo. Then it ended with: "If your LDL is above 160 mg/dL, run a detailed plan past a local dietitian before making big swaps."

It knew my location from my account and worked it into a health recommendation without being asked.

My take: I've tested a lot of AI health features. They usually just recite a nutrition textbook. Muse Spark did better.

Meta says over 1,000 physicians contributed to the health training data, and honestly, the quality of the output reflects that.

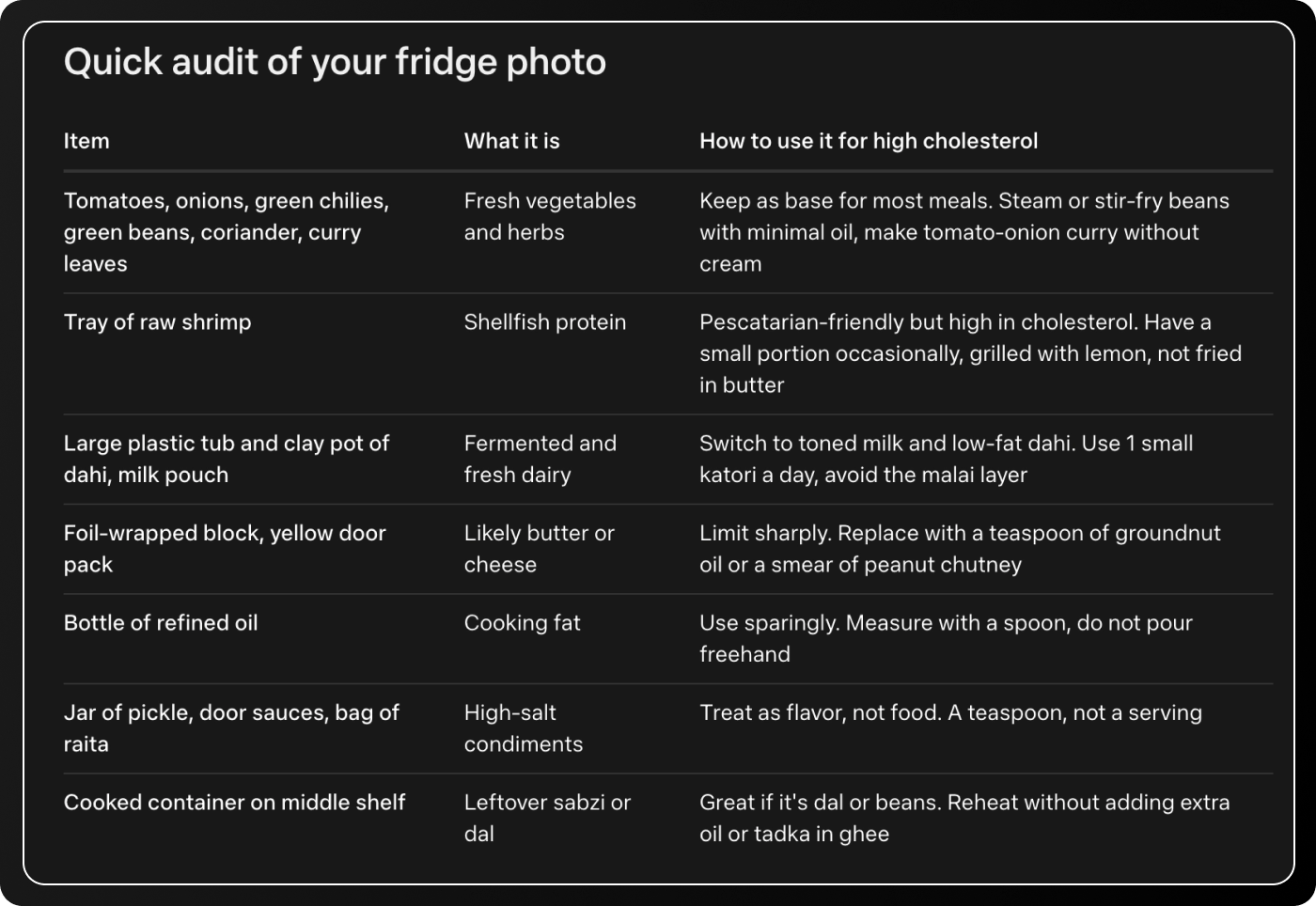

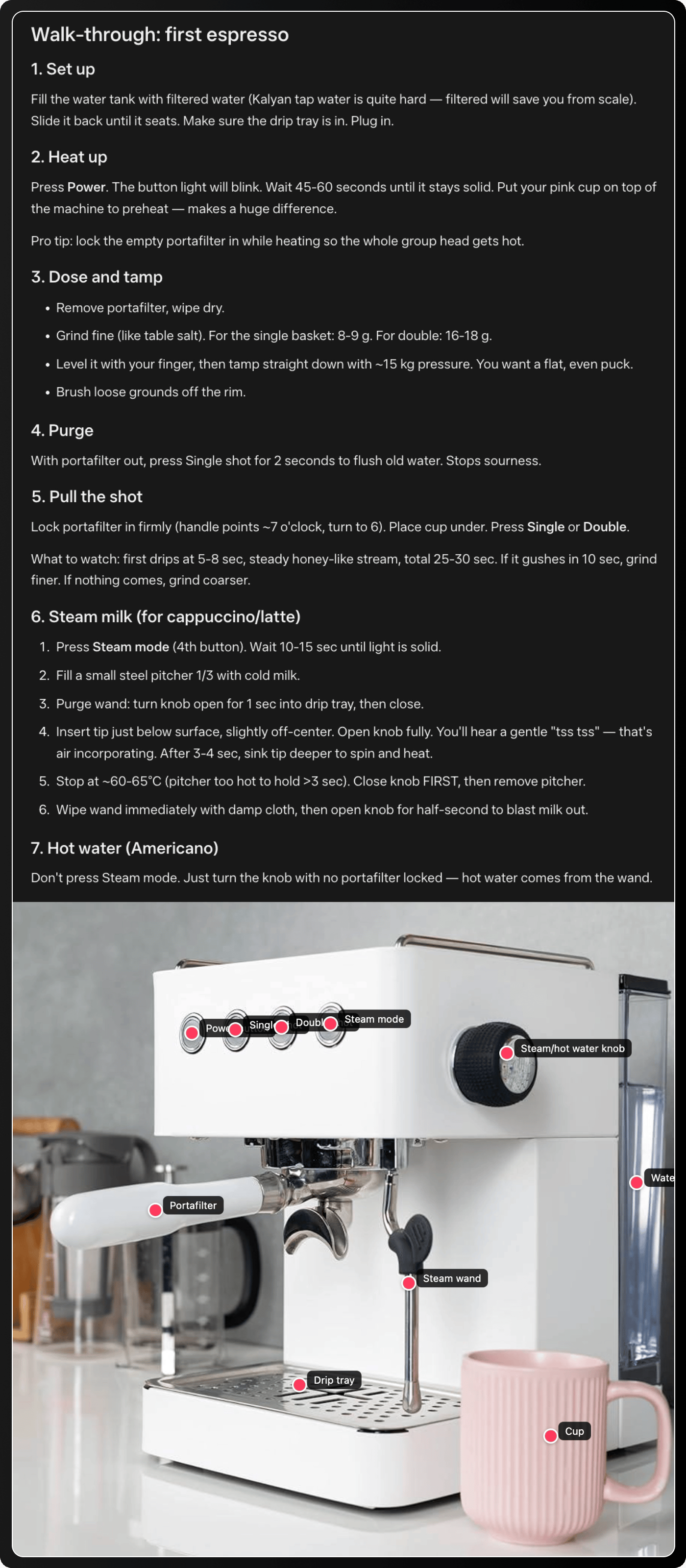

Test 2: The coffee machine

Input:

Prompt: "Walk me through how to use this."

Output:

It identified it as a semi-automatic espresso machine, labelled every component correctly from the photo, and generated a step-by-step tutorial as an interactive HTML page inside the chat window.

It also provided a full walk-through with timing, temperatures, and troubleshooting. It even flagged that hard water in the region contributes to scale buildup.

My take: This is what "understands your physical environment" looks like.

It's not just generating generic espresso instructions. It's reading your specific machine and building a guide around it.

Think about how many things you have at home that you've never properly figured out. If it handles them the way it handled this machine, it could work for most of them.

Test 3: Real-time stocks

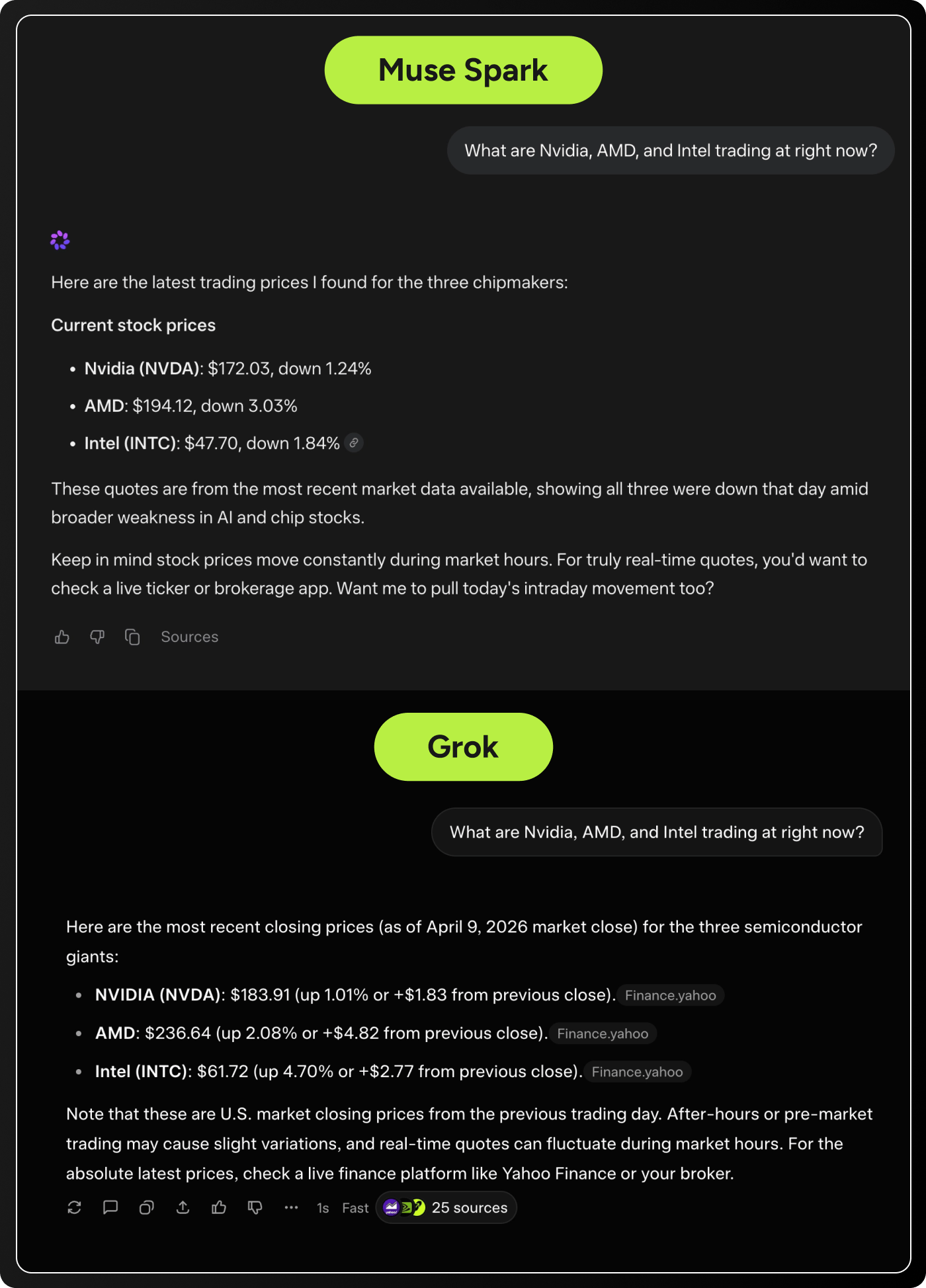

I'd read that Muse Spark was beating Grok on live data, which would be a big deal. Grok runs on X's firehose and has been the leader in real-time information since it launched.

Input:

Same prompt to both: "What are Nvidia, AMD, and Intel trading at right now?"

Output:

They came back at similar speeds. But Muse Spark's numbers were completely wrong.

Also, Grok cited 25 sources while Muse Spark cited none.

My take: Speed is irrelevant if the answer is wrong.

For anything involving live data (stocks, scores, breaking news), Grok is still the only real option here, and it wasn't even close.

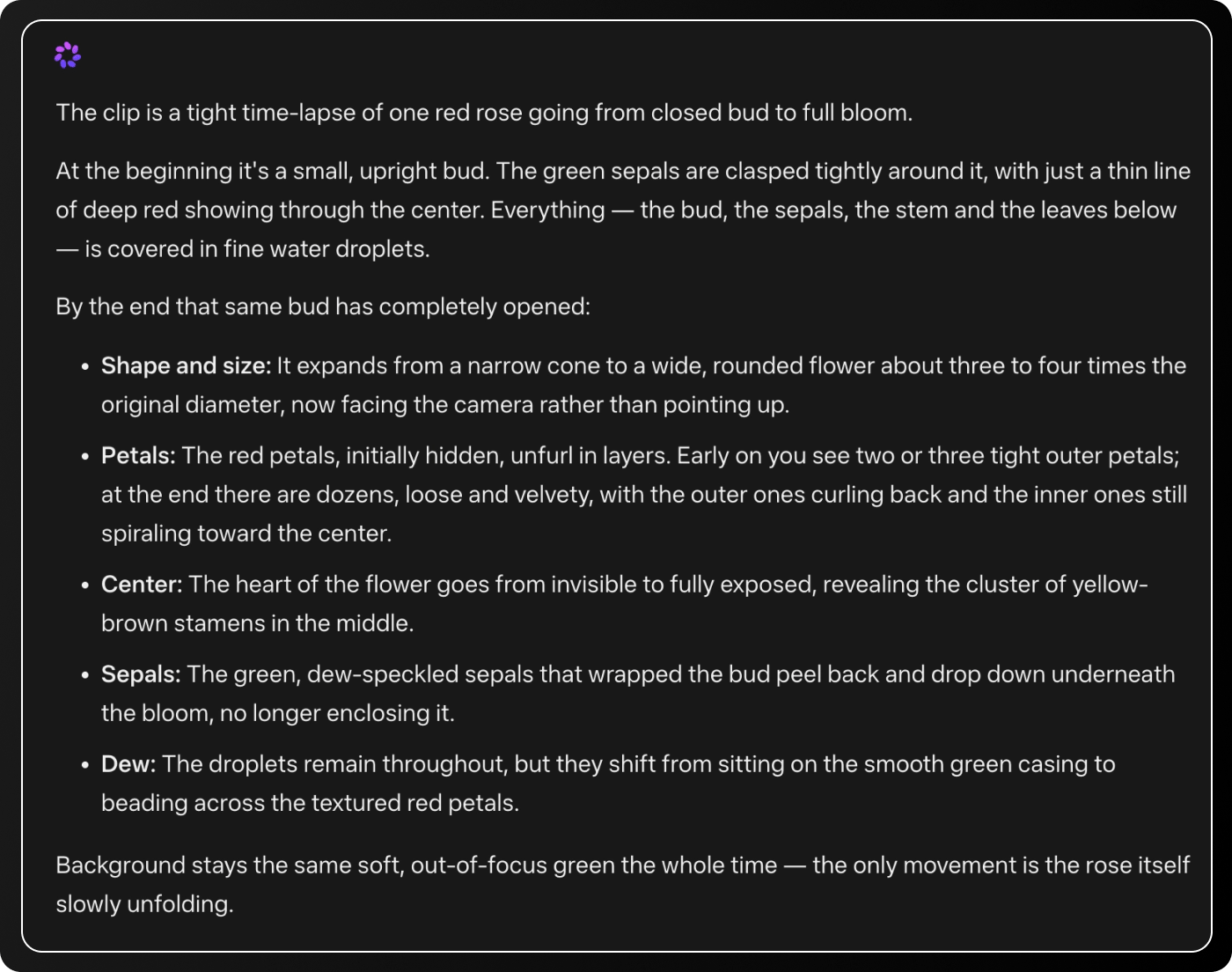

Test 4: Video

Input:

Prompt: "Describe what changed between the beginning and the end."

Output:

It got the full sequence right.

My take: Most models that say they analyze video are really just looking at a few frames and guessing between them. This one watched the clip.

Meta says it's one of only two models right now that does this natively, the other being Gemini.

For anyone working with video content, this is helpful.

The bottom line

The camera features are the best I've tested from any free AI tool right now.

The fridge test and coffee machine tutorial worked on the first try with no prompt engineering, and the outputs were quite useful.

But the stock test is a reminder that impressive demos can live alongside real gaps. Meta's real-time data access is not what they're claiming.

That benchmark comparison they published about beating Grok deserves a lot of skepticism.

One more thing worth knowing: you log in with your Facebook or Instagram account. Meta's privacy policy has very few limits on how it can use data you share with the AI.

So before you start photographing your fridge and describing your health conditions, that's worth a conscious decision.

Where I'd use it: point your phone at something physical and ask a question. That's the lane it's clearly built for and it's better than anything else I've tried there.

What would you test it on first? Reply and let me know.

Until next time,

Vaibhav 🤝
