Anthropic shared something internally that I keep thinking about: code output per engineer grew 200% last year.

My first reaction was, great, everyone's shipping more.

But then I sat with it a bit longer and realized the part nobody was talking about is what happens after the code gets written.

Because it still has to get reviewed by a human who is very much not running 200% faster.

PRs are getting bigger, there are more of them, and the same two senior devs who were already stretched are now the bottleneck for a team generating three times the output it used to.

Cognition has a name for what happens next: the Lazy LGTM problem.

Reviewers scan the diff, don't fully understand what changed, and approve anyway because 40 PRs are open and someone just needs to make a call.

Both Anthropic and Cognition shipped code review tools within weeks of each other to fix this.

I went deep on both this week and what surprised me is how differently they've thought about the same problem.

But first, let's catch up on some tools I think you should check out.

Dev Tools of the Week

1. CodeRabbit — AI code review that integrates directly into GitHub and GitLab. Summarizes PRs, leaves inline comments, and generates review reports. Fast, cheap, and zero setup friction. Good starting point if you want AI in the review loop without committing to something heavier.

2. Greptile — Code review that understands your entire codebase, not just the diff. Flags issues with context from adjacent code, internal patterns, and architectural decisions. Built for teams where "why does this exist" matters as much as "does this compile."

3. Cursor BugBot — Cursor's answer to review automation. Runs on every PR, flags potential bugs inline, and lets you fix them directly in Cursor. If you're already in the Cursor ecosystem, worth switching on today.

Two Tools, One Problem

Before Anthropic deployed their code review tool internally, 16% of their PRs got substantive review comments.

After: 54%.

I nearly stopped there, but the more interesting part is how it actually works.

When a PR opens, Claude Code dispatches a team of agents in parallel. They scan the diff, cross-check each other's findings to cut false positives, then return one consolidated review with a single high-signal comment and inline annotations on the specific bugs they found.

Average review time is around 20 minutes.

Large diffs get more agents, small ones get a lighter pass.
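
To make the fan-out idea concrete, here's a rough sketch of how I picture it. This is not Anthropic's code. The sizing thresholds, the `min_votes` rule, and the toy agents are all my own assumptions, and I'm using simple vote counting as a stand-in for whatever cross-checking they really do.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def agents_for_diff(changed_lines: int) -> int:
    """Hypothetical sizing rule: bigger diffs get more reviewers."""
    if changed_lines < 50:
        return 2
    if changed_lines < 1000:
        return 4
    return 8


def review(diff: str, agents, min_votes: int = 2) -> list[str]:
    """Run every agent on the diff in parallel, then keep only the
    findings that at least `min_votes` agents reported independently."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        results = list(pool.map(lambda agent: agent(diff), agents))
    # set() so one agent repeating itself only counts as one vote
    votes = Counter(finding for found in results for finding in set(found))
    return sorted(f for f, n in votes.items() if n >= min_votes)


# Toy agents: plain functions that return finding strings.
def agent_a(diff):
    return ["auth check removed", "unused import"]


def agent_b(diff):
    return ["auth check removed"]


def agent_c(diff):
    return ["auth check removed", "possible null deref"]


print(review("...diff text...", [agent_a, agent_b, agent_c]))
# ['auth check removed']
```

The point of the vote threshold is the same one the real tool is chasing: a finding only one agent believes is more likely to be noise, so it gets dropped before a human ever sees it.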

From their internal data: PRs over 1,000 lines get findings 84% of the time, averaging 7.5 issues flagged. PRs under 50 lines get findings 31% of the time, averaging about half an issue. Fewer than 1% of findings were marked incorrect by engineers. That last number matters because a tool that surfaces a lot of false positives just creates another job: someone has to dismiss them all day.

One real example from their own production use: a one-line change looked completely routine. Claude Code flagged it as critical because it would have broken authentication on merge. The engineer said they wouldn't have caught it.

Setup:

Billing is based on token usage and averages $15 to $25 per review, scaling with PR size. It's available now in research preview on Team and Enterprise plans.
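
If you want to sanity-check that against your own PR volume, the back-of-envelope math is simple. A quick sketch, assuming every PR gets reviewed and the $15 to $25 average holds for your diffs (the 200 PRs a month is a made-up team, not a number from Anthropic):

```python
def monthly_review_cost(prs_per_month: int,
                        low: float = 15.0,
                        high: float = 25.0) -> tuple[float, float]:
    """Rough monthly spend range, using the quoted per-review averages."""
    return prs_per_month * low, prs_per_month * high


low, high = monthly_review_cost(200)  # hypothetical team: 200 PRs a month
print(f"${low:,.0f} to ${high:,.0f} per month")
# $3,000 to $5,000 per month
```

Since cost scales with PR size, a team that ships mostly small PRs would probably land under this range and a team shipping huge ones above it.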

The honest tradeoff is that 20 minutes is slow, and it costs more than lightweight CI tools. The whole pitch is that the depth is worth it, which is easier to believe when Anthropic is running this on their own code and showing you the before and after.

Now Devin Review, which is doing something completely different

Devin Review is trying to make reviewing less painful by rebuilding the interface you do it in. And it's currently free.

The biggest thing it changes is how diffs are organized. GitHub shows changes alphabetically by filename, which, when you think about it, is one of the least helpful ways to understand what actually happened in a PR. Devin reorganizes everything logically so related changes appear together in an order a human can follow top to bottom. When code was moved or renamed, GitHub surfaces full deletes and full rewrites as if everything were new. Devin detects what was just copied and hides it so you can focus on what actually changed.
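
I don't know how Devin detects moved code under the hood, but the basic idea is easy to sketch. Here's a minimal version that assumes you already have the removed and added blocks keyed by location. The function names and the "location: block" input shape are mine, purely for illustration.

```python
def normalize(block: str) -> str:
    """Ignore indentation and blank lines so re-indented moves still match."""
    return "\n".join(line.strip() for line in block.splitlines() if line.strip())


def split_moves(removed: dict[str, str], added: dict[str, str]):
    """Separate pure moves from real changes.

    Returns (moves, real_changes). A move is an (old_location, new_location)
    pair whose code is identical after normalization."""
    by_content: dict[str, list[str]] = {}
    for loc, block in removed.items():
        by_content.setdefault(normalize(block), []).append(loc)

    moves, real_changes = [], []
    for loc, block in added.items():
        sources = by_content.get(normalize(block))
        if sources:  # an identical block was deleted somewhere else
            moves.append((sources.pop(0), loc))
        else:
            real_changes.append(loc)
    return moves, real_changes


removed = {"utils.py:10": "def slug(s):\n    return s.lower()"}
added = {
    "text/slug.py:1": "def slug(s):\n        return s.lower()",  # same code, re-indented
    "api.py:42": "validate(token)",                              # genuinely new
}
moves, real_changes = split_moves(removed, added)
print(moves)         # [('utils.py:10', 'text/slug.py:1')]
print(real_changes)  # ['api.py:42']
```

Everything in `moves` is what a reviewer can safely skim, and `real_changes` is where the attention should go.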

The inline chat is the most interesting part. You ask questions about the PR without leaving the review, and Devin answers with full codebase context.

Bug detection works in three tiers: red for probable bugs, yellow for warnings, gray for commentary. You decide what to do with each one.
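
If you wanted to model that three-tier scheme yourself, say to sort flags before working through them, it could look something like this. The `Tier` and `Flag` names and the sample messages are invented for the example and are not Devin's data model.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    RED = 0     # probable bug
    YELLOW = 1  # warning
    GRAY = 2    # commentary


@dataclass
class Flag:
    tier: Tier
    message: str


def triage(flags: list[Flag]) -> list[Flag]:
    """Most severe first; sorted() is stable, so ties keep their original order."""
    return sorted(flags, key=lambda f: f.tier)


flags = [
    Flag(Tier.GRAY, "helper could be renamed"),
    Flag(Tier.RED, "token is never validated"),
    Flag(Tier.YELLOW, "retry loop has no upper bound"),
]
for flag in triage(flags):
    print(flag.tier.name, "-", flag.message)
# RED - token is never validated
# YELLOW - retry loop has no upper bound
# GRAY - helper could be renamed
```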

Access it:

How to actually review and fix code with it:

Once you're in the interface, the workflow is pretty different from what you're used to.

Step 1: Read the reorganized diff top to bottom. Devin groups related changes logically and adds a one-line explanation above each group. You're not hunting through files, you're following a narrative of what changed and why.

Step 2: Use the inline chat when something is unclear. Click into any section and ask "what does this function do" or "is there any validation happening upstream." Devin answers with full codebase context, so you're not just looking at the diff in isolation.

Step 3: Work through the bug flags. Red flags are probable bugs, yellow are warnings worth checking, gray are FYIs. For each one, you can copy the flag to leave as a comment, dismiss it, or pass it to the Devin agent to fix.

Step 4: Hand off fixes to the agent. This is where it gets useful. When you find something that needs fixing, you can assign it directly to Devin from inside the review. The agent fixes it, opens a new commit, and waits for your approval. You review the fix, approve it, and it's merged. The whole loop stays in one place without switching between tools.

The honest tradeoff is that this is a UI tool. It makes reviewing faster, but you're still the one reviewing. Where it gets more interesting is if your team already uses Devin as an autonomous coding agent: the review can hand a flagged issue straight to the agent, you approve the fix, and it's done without leaving the workflow.

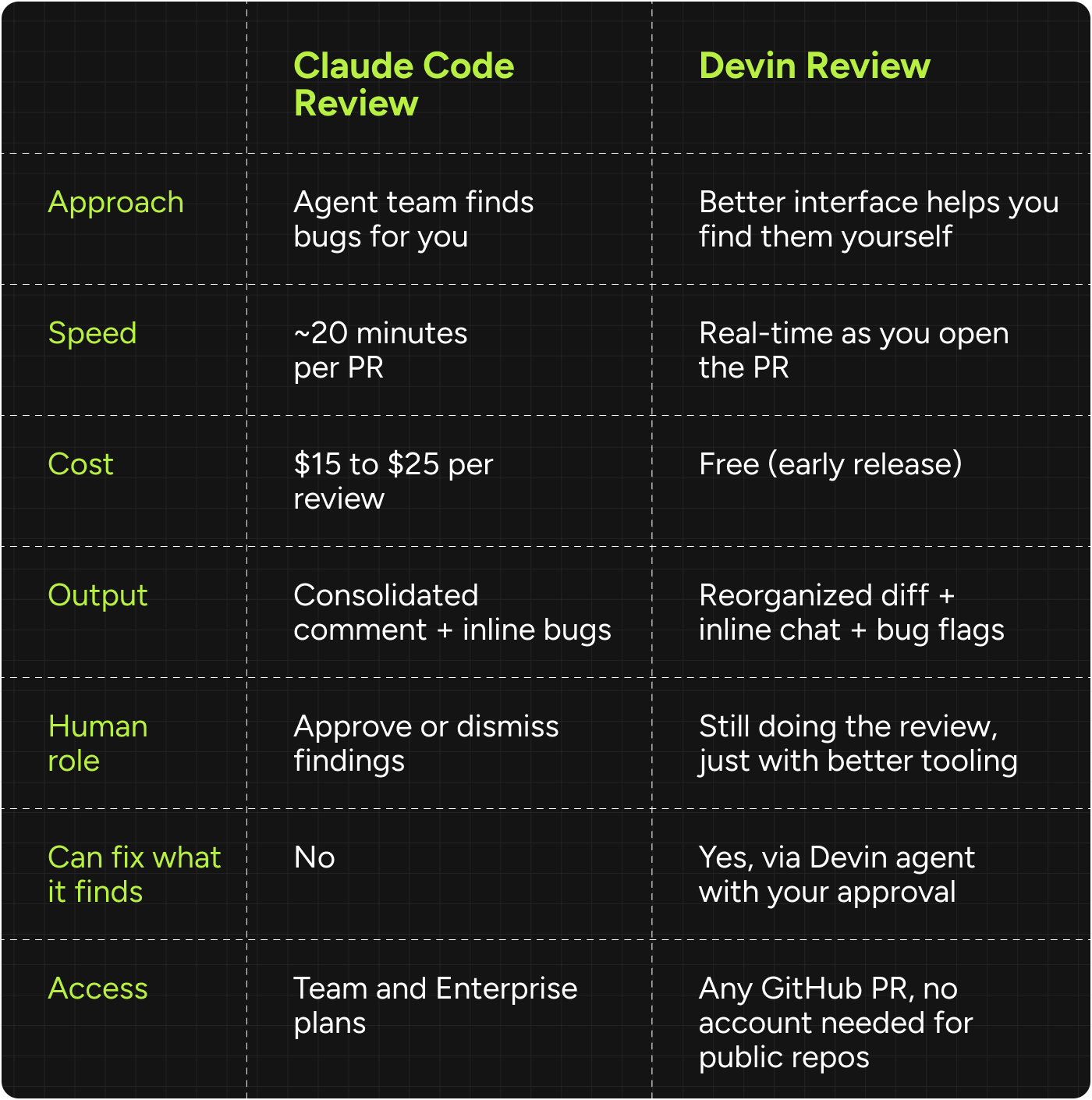

TL;DR of my comparison:

Claude Code Review makes sense if you have high PR volume and you want every PR to get a proper read regardless of who's available. The agent doesn't get tired, doesn't rubber-stamp things at 5pm on a Friday.

Devin Review makes sense if the problem isn't finding bugs but that nobody can figure out what they're even looking at. Free access and zero friction make this the obvious first thing to try.

Large teams with budget should honestly consider running both.

They're solving different parts of the same problem.

My take

Code review was ignored for 15 years because GitHub made it functional enough that nobody built a better alternative.

LGTM culture became a joke because everyone knew reviews were mostly performative at scale.

What broke that was agents.

When one engineer generates 10x the code, the review queue breaks in ways you can't fix with better processes.

What I find credible about both these tools is that they were built to solve the builders' own problem first. Anthropic's 54% number is from their own engineering team.

And Cognition built Devin Review because their own customers told them generation was no longer the bottleneck.

Try Devin Review today imo and then figure out whether Claude Code Review's depth justifies the cost for your team.

Until next time,

Vaibhav 🤝🏻
